Facebook has open-sourced Fizz, their TLS 1.3 library. In their post they mention early data (0-RTT):

Using early data in TLS 1.3 has several caveats, however. An attacker can easily replay the data, causing it to be processed twice by the server. To mitigate this risk, we send only specific whitelisted requests as early data, and we’ve deployed a replay cache alongside our load balancers to detect and reject replayed data. Fizz provides simple APIs to be able to determine when transports are replay safe and can be used to send non-replay safe data.

My guess is that either all GET requests are considered safe, or only GET requests on the / route are considered safe.

I'm wondering why they use a replay cache on the other side as this overhead could nullify the benefits of 0-RTT.
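
To make the two mitigations from the quote concrete, here is a minimal sketch of a request whitelist and a replay cache. None of this is Fizz's API: `ReplayCache`, `accept`, and `is_replay_safe` are hypothetical names, the identifier is assumed to be something unique to the early data flight (a hash of the ClientHello, for instance), and the GET-only policy is just my guess from above.

```cpp
#include <string>
#include <unordered_set>

// Hypothetical replay cache: remembers one identifier per 0-RTT flight
// and refuses to accept the same identifier twice.
class ReplayCache {
 public:
  // Returns true the first time an identifier is seen, false on a replay.
  bool accept(const std::string& early_data_id) {
    return seen_.insert(early_data_id).second;
  }

 private:
  std::unordered_set<std::string> seen_;
};

// Hypothetical whitelist policy (an assumption, not Facebook's actual rule):
// only GET requests are allowed to travel as early data.
bool is_replay_safe(const std::string& http_method) {
  return http_method == "GET";
}
```

A real deployment would also have to expire entries and share the cache between all the load balancers, and that shared lookup is the overhead I'm wondering about.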

They also mention that every state transition is stored in one place, and this is true:

    FIZZ_DECLARE_EVENT_HANDLER(
        ClientTypes,
        StateEnum::Uninitialized,
        Event::Connect,
        StateEnum::ExpectingServerHello);

    FIZZ_DECLARE_EVENT_HANDLER(
        ClientTypes,
        StateEnum::ExpectingServerHello,
        Event::HelloRetryRequest,
        StateEnum::ExpectingServerHello);

    FIZZ_DECLARE_EVENT_HANDLER(
        ClientTypes,
        StateEnum::ExpectingServerHello,
        Event::ServerHello,
        StateEnum::ExpectingEncryptedExtensions);

I think this is a great idea, which more TLS libraries should emulate. I had started a whitelist of transitions for TLS 1.3 draft 18 here but it's probably outdated.
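
The same idea can be sketched without Fizz's macros as one table mapping a (state, event) pair to the next state. This is only an illustration built from the three transitions quoted above; `State`, `Event`, `allowed_transitions`, and `next_state` are names made up for the example, not Fizz's types.

```cpp
#include <map>
#include <utility>

enum class State { Uninitialized, ExpectingServerHello, ExpectingEncryptedExtensions };
enum class Event { Connect, HelloRetryRequest, ServerHello };

using Transition = std::pair<State, Event>;

// Every allowed transition lives in this one table; anything absent is rejected.
const std::map<Transition, State>& allowed_transitions() {
  static const std::map<Transition, State> table = {
      {{State::Uninitialized, Event::Connect}, State::ExpectingServerHello},
      {{State::ExpectingServerHello, Event::HelloRetryRequest}, State::ExpectingServerHello},
      {{State::ExpectingServerHello, Event::ServerHello}, State::ExpectingEncryptedExtensions},
  };
  return table;
}

// Writes the next state to `out` and returns true if the transition is whitelisted.
bool next_state(State current, Event event, State& out) {
  const auto it = allowed_transitions().find(Transition(current, event));
  if (it == allowed_transitions().end()) return false;
  out = it->second;
  return true;
}
```

With this shape, receiving a ServerHello while still in `Uninitialized` simply has no entry in the table, so the caller can abort the handshake instead of guessing.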

Sometimes you don't want to use TCP, or you can't, and you thus have to deal with UDP.

To simplify, you can think of TCP as a collection of algorithms layered on top of something like UDP to support in-order delivery of streams. UDP, on the other hand, does not care about such streams: it sends blocks of messages (called datagrams) in whatever order, and provides no guarantee whatsoever that you will ever receive them.

TCP also provides some security guarantees on top of IP. By starting a session with a TCP handshake, it allows both endpoints of a communication to provide a proof of IP ownership, or at least a proof that they can read whatever is sent to their claimed IPs. This means that to mess with TCP, you need to be an on-path attacker man-in-the-middle'ing the connection between the two endpoints. UDP has none of that, as it has no notion of sessions: whatever packets are received from an IP, it'll just accept them. This means that an off-path attacker can trivially send packets that look like they are coming from any IP, effectively spoofing the IP of either one of the endpoints or of anyone else on the network. If the protocol built on top of UDP does not do anything to detect and prevent this, then bad things might happen (from complex attacks to simple denial of service).

This is not the only bad thing that can happen though. Sometimes (meaning for some protocols) a well-crafted message to an endpoint will trigger a large and disproportionate response. Malicious actors on the internet can use this to perform amplification attacks, which are a kind of denial-of-service attack. To do that, the actor sends this special type of message while pretending to be the victim's IP, and the endpoint then responds with a large amount of data to the victim's IP.

Intuitively, it sounds like both of these issues can be tackled by doing some sort of TCP-like handshake, but in practice this is rarely enough, as the very first message of your protocol (which hasn't been able to provide a proof of IP ownership yet) can still trigger large messages. This is why in QUIC the very first message from a client needs to be padded with 0s up to a minimum size (1200 bytes in RFC 9000), and why the server limits how much it sends back (three times what it received) until the client's address is validated. An attacker then has to spend resources comparable to what the attack yields, which removes most of its benefit.
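
Here is the arithmetic behind amplification as a small sketch. The function names are mine, and the two QUIC numbers are assumptions taken from RFC 9000 rather than from anything above: a 1200-byte minimum for the client's first datagram, and a server budget of three times the bytes received before the address is validated.

```cpp
#include <cstddef>

// Bytes reflected to the victim for every byte the attacker sends.
double amplification_factor(std::size_t request_bytes, std::size_t response_bytes) {
  return static_cast<double>(response_bytes) / static_cast<double>(request_bytes);
}

// Assumed from RFC 9000: minimum size of the client's first datagram.
constexpr std::size_t kMinClientInitialBytes = 1200;

// Assumed from RFC 9000: until the client's address is validated, the server
// sends at most three times what it has received from that address.
std::size_t unvalidated_send_budget(std::size_t bytes_received) {
  return 3 * bytes_received;
}
```

For example, a 50-byte spoofed request that triggers a 3000-byte answer gives `amplification_factor(50, 3000) == 60.0`, whereas a padded QUIC first message can trigger at most `unvalidated_send_budget(kMinClientInitialBytes) == 3600` bytes, a factor of 3.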

Looking at another protocol built on top of UDP, DTLS (TLS for UDP) has a notion of "cookie", which is really some kind of bearer token that the client will have to keep providing to the server in relevant messages, in order to prove that it is indeed the same endpoint talking to the server.

TLS 1.3 has been released as RFC 8446. It took 28 drafts and more than 4 years since draft 0 to come out. Cloudflare has a long blog post about it. Some questions about the deployment of 1.3:

- Will we see a fast deployment of the protocol? It seems like browsers are ready, but web servers will have to follow.

- Who will use 0-RTT? I'm expecting the big players to use it (largely because they've been requesting it) but what about the small ones?

- Are we going to see vulnerabilities in the protocol? It seems highly unlikely: TLS 1.2 itself (with AES-GCM) has remained solid for more than 10 years.

- Are we going to see vulnerabilities in the implementations? We will see about that. If anything happens, I'm expecting it to happen around 0-RTT, PSKs and key exports. But let's hope that libraries have learned their lessons.

- Is BearSSL going to implement TLS 1.3? It sounds like it.

Someone wrote a blogpost about man-in-the-middling WhatsApp.

First, there is nothing new in being able to man-in-the-middle and decrypt your own TLS sessions (+ a simple protocol on top). Sure the tool is neat, but it is not breaking WhatsApp in this regard, it is merely allowing you to look at (and to modify) what you're sending to the WhatsApp server.

The blog post goes through some interesting ways to mess with a WhatsApp group chat, as it seems that the application relies in some parts on metadata that you are in control of. This is bad hygiene, but for me the interesting attack is attack number 3: you can send messages to SOME members of the group, and send different messages to OTHER members of the group.

At first I thought: this is nothing new. If you read the WhatsApp whitepaper it is a clear limitation of the protocol: you do not have transcript consistency. And by that I mean, nothing is cryptographically enforcing that all members of a group chat are seeing the exact same thing.

It is always hard to ensure that the last messages have been seen by everyone of course (some people might be offline), but transcript consistency really only cares about ordering, dropping, and tampering of the messages.

Let's talk about WhatsApp some more. Its protocol is very different from what Signal does: in group chats, each member shares their own unique symmetric key with the other members of the group (separately). This means that when you join a group with Alice and Bob, you first create some random symmetric key. After that, you encrypt it under Alice's public key and you send it to her. You then do the same thing with Bob. Once all the members have knowledge of your random symmetric key, you can encrypt all of your messages with it (perhaps using a ratchet). When a member leaves, you have to go through this dance again in order to provide forward secrecy to the group (leavers won't be able to read messages anymore). If you understood what I said, the protocol does not really give you a way to enforce transcript consistency: you are in control of the keys, so you choose which messages you encrypt to whom.
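
To illustrate what "no transcript consistency" means, here is a toy model with no cryptography at all. It only tracks what each member ends up seeing when the sender gets to pick the recipients of each message; `GroupChat`, `deliver`, and `consistent` are hypothetical names, and nothing here reflects WhatsApp's actual code.

```cpp
#include <map>
#include <string>
#include <vector>

// Toy model: each member only records what a sender chose to deliver to them.
class GroupChat {
 public:
  explicit GroupChat(const std::vector<std::string>& members) {
    for (const auto& member : members) {
      transcripts_.emplace(member, std::vector<std::string>{});
    }
  }

  // The sender decides who receives the message, which is the power you have
  // when you control the keys and nothing binds the members' views together.
  void deliver(const std::vector<std::string>& recipients, const std::string& message) {
    for (const auto& recipient : recipients) {
      transcripts_.at(recipient).push_back(message);
    }
  }

  // True when every member has seen exactly the same messages in the same order.
  bool consistent() const {
    for (const auto& entry : transcripts_) {
      if (entry.second != transcripts_.begin()->second) return false;
    }
    return true;
  }

 private:
  std::map<std::string, std::vector<std::string>> transcripts_;
};
```

Delivering one message to both Alice and Bob keeps `consistent()` true; delivering "lunch at noon" to Alice only and "lunch at one" to Bob only makes it false, and neither of them can tell from their own view.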

But wait! Normally, the server should distribute the messages in a fan-out way (the server distributes one encrypted message to X participants), forcing you to collude with a root@WhatsApp in order to perform this kind of shenanigans. In the blog post's attack it seems like you are able to bypass this and do not need the help of WhatsApp's servers. This is bad and I'm still trying to figure out what really happened.

By the way, to my knowledge no end-to-end encrypted protocol has this property of transcript consistency for group chats. Interestingly, Messaging Layer Security (MLS), which is the latest community effort to standardize a messaging protocol, does not have a solution for this either. I'll probably talk about MLS in a different blog post because it is still very interesting.

The last thing I wanted to mention is trust inside of a group chat. We've been trying to solve trust in a one-to-one conversation for many, many years, and between PGP being broken and the many wars between the secure messaging applications, it seems like this is still something we're struggling with. Just yesterday, a post titled "I don't trust Signal" made the front page of Hacker News. So is there hope for trust in a group chat anytime soon?

First, there are three kinds of group chat:

- large group chats

- medium-sized group chats

- small group chats

I'll argue that large group chats have given up on trust, as it is next to impossible to figure out who is who. Unless of course we're dealing with a PKI and a company enforcing onboarding with a CA. And even this has issues (beyond the traitors and snoops).

I'll also argue that small group chats are fine with the current protocols, because you're probably trusting people not to run these kinds of attacks.

The problem is in medium-sized group chats.

If you don't know about QUIC, go read the excellent Cloudflare post about it. If you're lazy, just think about it as:

- Google wanted to improve TCP (2.0™️)

- but TCP can't really be changed

- so they built it on top of UDP (which is just IP with ports, check the 2 page RFC for UDP if you don't believe me)

- they made it with encryption by default

- and they called it QUIC, because it's quick, you know

There is more to it (it makes HTTP blazing fast with multiplexed streams and all), but I'm only interested in the crypto here.

Google QUIC's (or gQUIC) default encryption was provided by a home-made crypto protocol called QUIC Crypto. The thing is documented in a 14-page doc file and is more or less up-to-date. It was at some point agreed that things needed to get standardized, and thus the process of making QUIC an RFC (or RFCs) began.

Unfortunately, QUIC Crypto did not make it, and the IETF decided to replace it with TLS 1.3 for various reasons.

Why "unfortunately", you ask?

Well, as Adam Langley puts it in some of his slides, the protocol was dead simple:

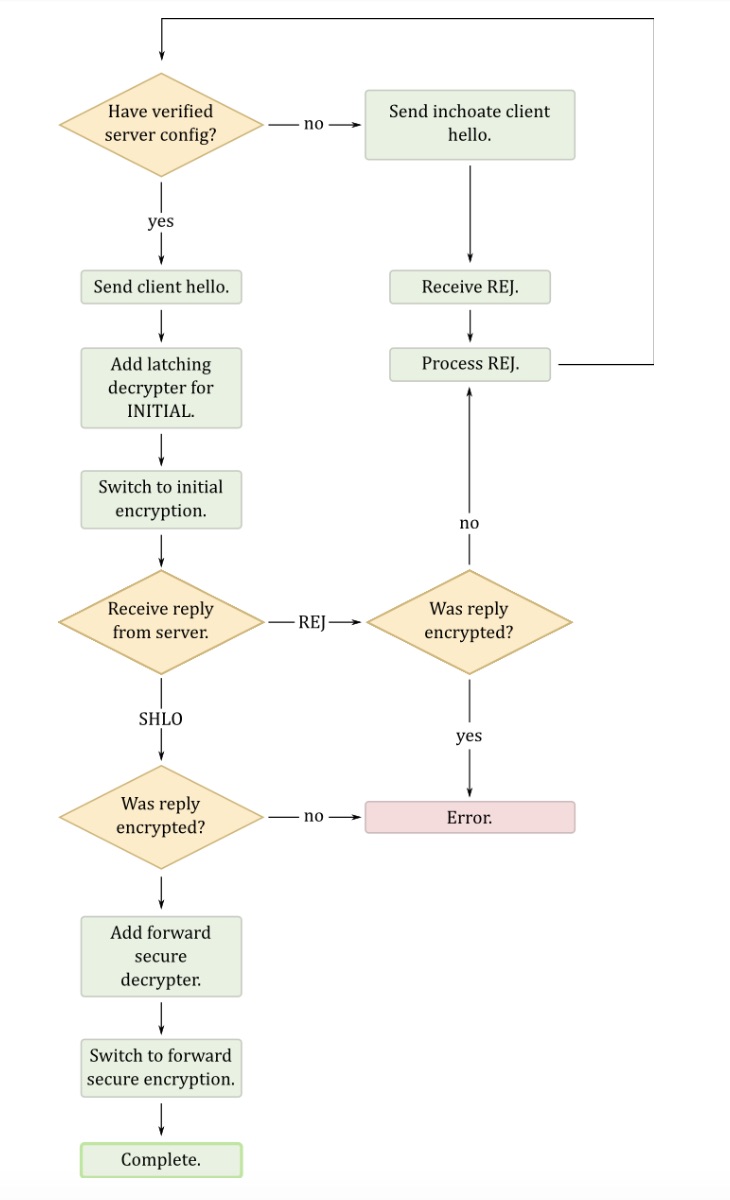

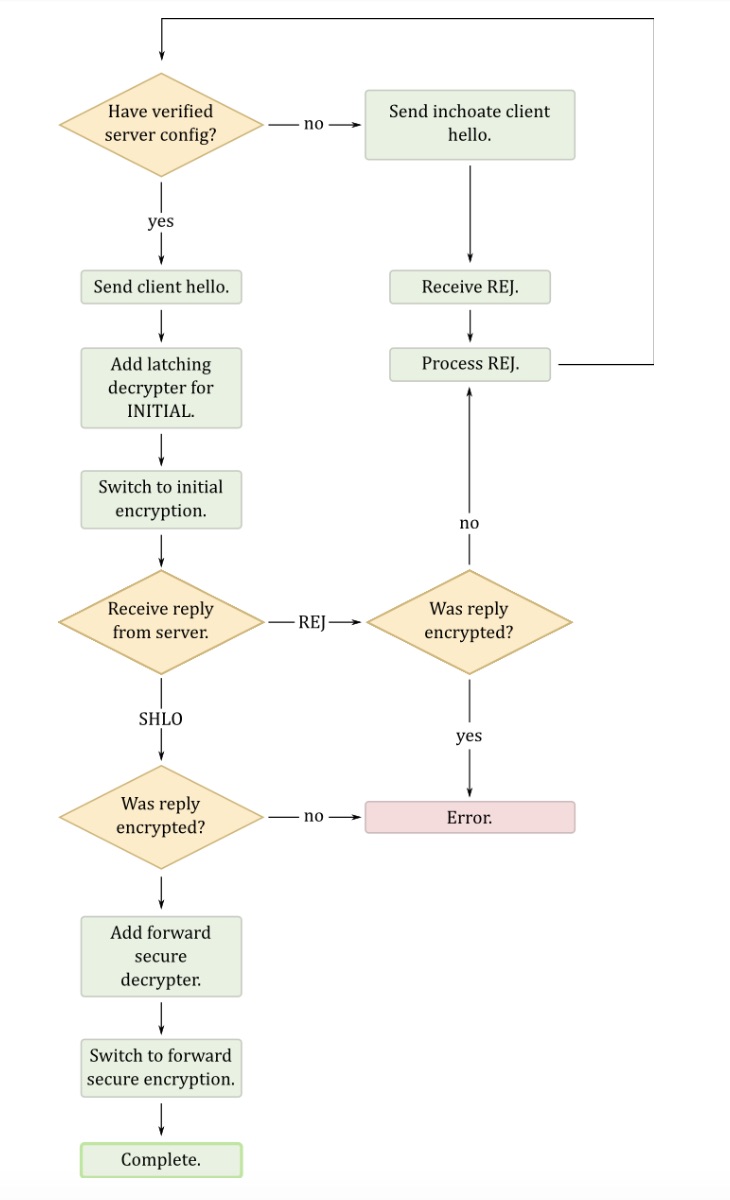

While the protocol had some flaws, in the end it was still a beautiful and elegant protocol. At its core was an extremely straightforward and linear state machine, summed up by this diagram:

A few things to help you read it:

- a server config is just a blob that contains the server's current semi-ephemeral keys. The server config is rotated every X days.

- an inchoate client hello is just an empty client hello, which prompts the server to send a REJ(ect) message containing its latest config (after that the client can try again with a full client hello)

- SHLO is an (encrypted) server hello which contains ephemeral keys

As you can see, there isn't much going on: if you know the keys of the server you can do some 0-RTT magic, and if you don't, you request the keys and start the handshake again.
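
That logic is small enough to sketch in a few lines. This is my own simplification of the description above, not code from gQUIC: the enum and function names are hypothetical, and a "valid" config simply means one the server has not rotated out yet.

```cpp
enum class ClientHello { Inchoate, Full };
enum class ServerReply { REJ, SHLO };

// The client can only send a full client hello if it holds a server config.
ClientHello choose_client_hello(bool has_server_config) {
  return has_server_config ? ClientHello::Full : ClientHello::Inchoate;
}

// The server answers SHLO to a full client hello built on a config it still
// accepts, and REJ (carrying its latest config) to anything else.
ServerReply server_reply(ClientHello hello, bool config_still_valid) {
  if (hello == ClientHello::Full && config_still_valid) return ServerReply::SHLO;
  return ServerReply::REJ;
}

// Counts how many client hellos are sent before the server answers SHLO.
int client_hellos_until_shlo(bool has_server_config, bool config_still_valid) {
  int sent = 0;
  while (true) {
    const ClientHello hello = choose_client_hello(has_server_config);
    ++sent;
    if (server_reply(hello, config_still_valid) == ServerReply::SHLO) return sent;
    // A REJ hands the client a fresh, valid config, so the next try is a full hello.
    has_server_config = true;
    config_still_valid = true;
  }
}
```

With a cached and still valid config, a single client hello is enough (the 0-RTT case); without one, or with a stale one, it takes exactly two.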

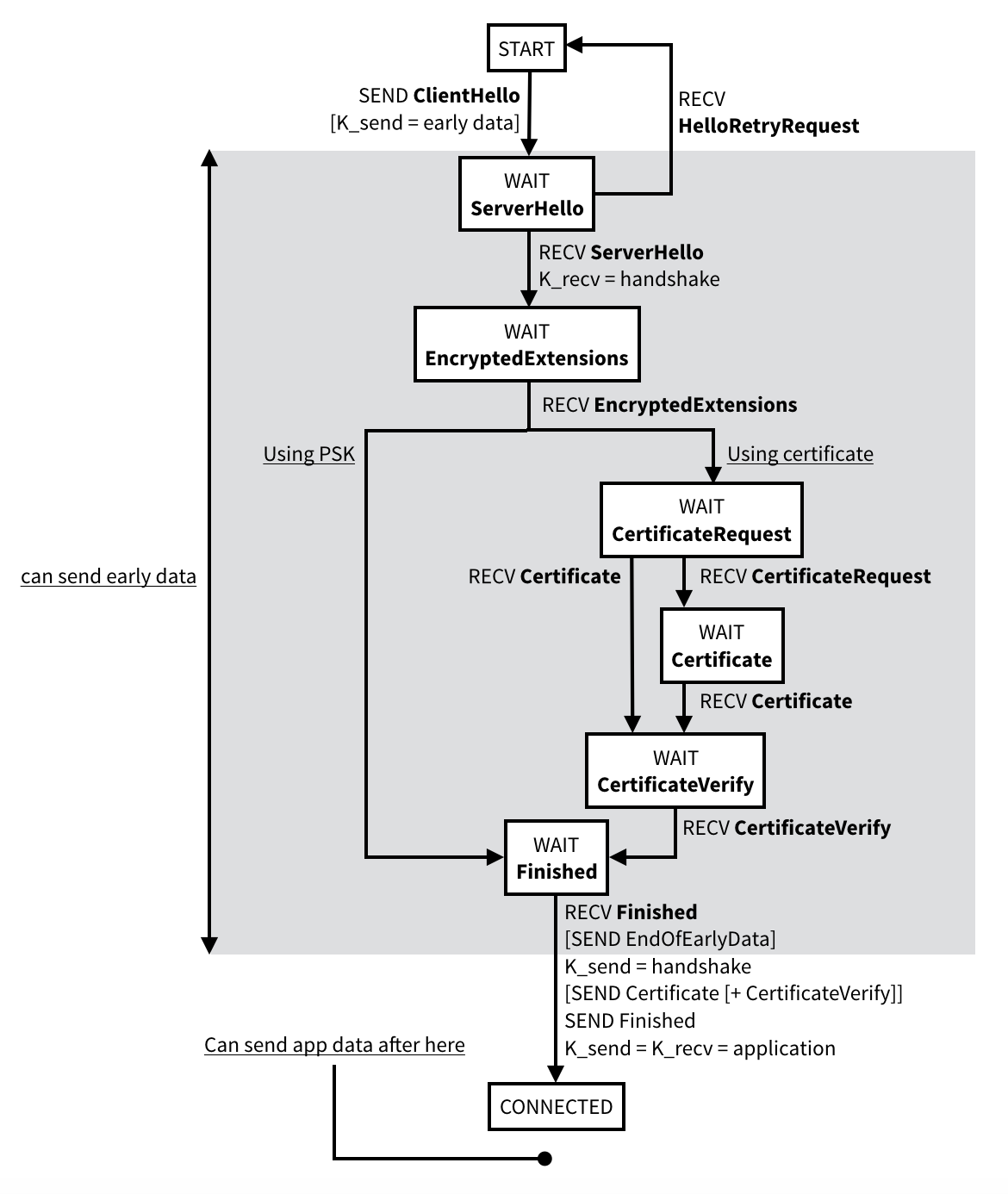

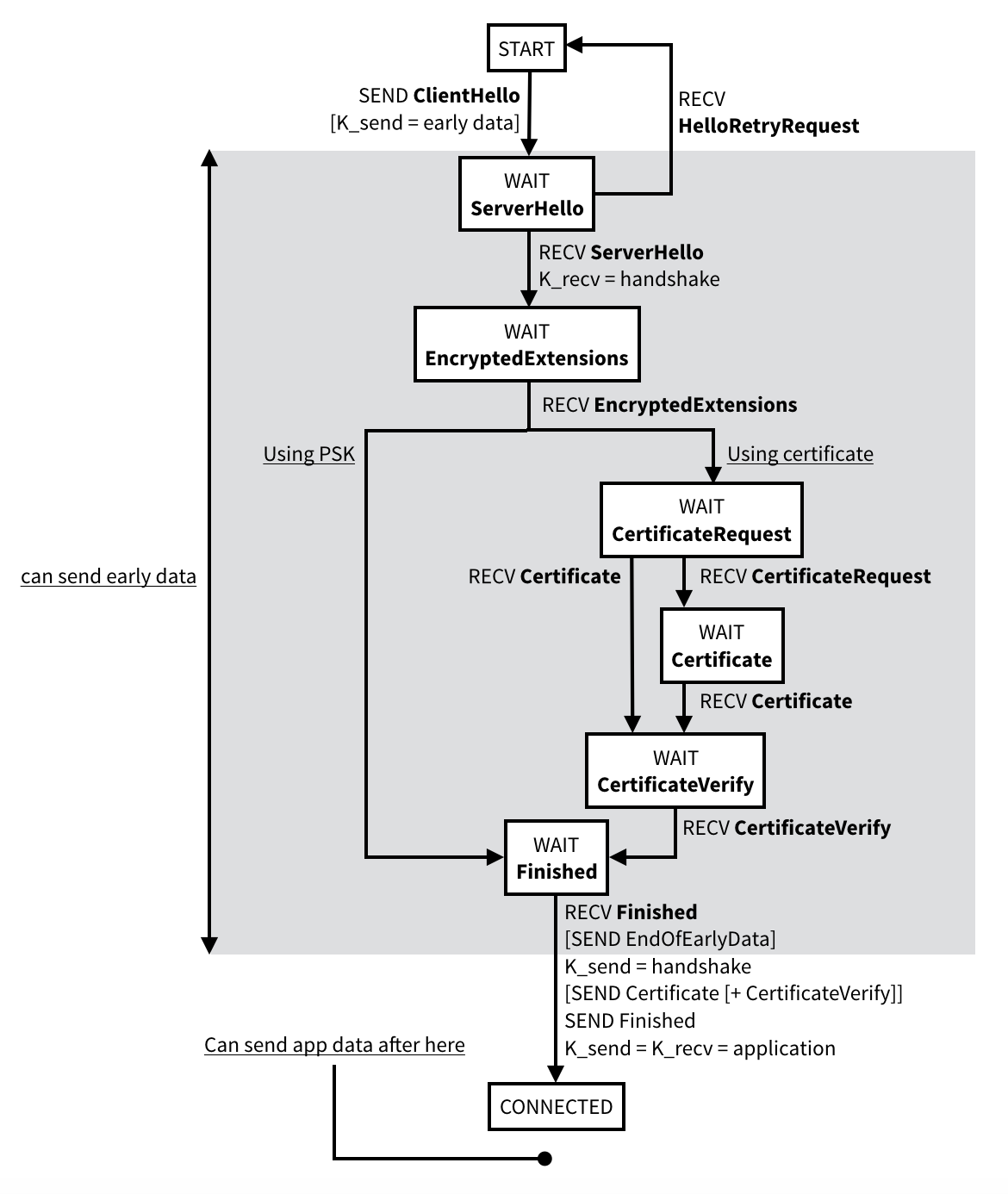

Compare that to the state machine of TLS 1.3:

In the end, TLS 1.3 is a solid protocol, but I'd like to see more experimentation here instead of just relying on TLS. Version 1.3 is built on top of numerous previous failed versions, which means a great amount of complexity due to legacy and to the multitude of use cases and extensions it needs to support. Simpler protocols should be better: simple state machines make for better analysis and more secure implementations. Just look at the Noise protocol framework, its 1k LOC implementations, and its symbolic proofs done with ProVerif and Tamarin. Actually, why haven't we started using Noise for everything?

Did you know that a bitcoin transaction does not have a recipient field?

That's right! When crafting a transaction to send money on the bitcoin network, you actually do not include "I am sending my BTC to _this address_". Instead, you include a script called a ScriptPubKey, which dictates a set of inputs that are allowed to redeem the monies. The PubKey in the name surely refers to the main use for this field: to actually let a unique public key redeem the money (the intended recipient). But that's not all you can do with it! There exist a multitude of ways to write ScriptPubKeys! You can for example:

- not allow anyone to redeem the BTCs, and even use the transaction to record arbitrary data on the blockchain (this is what a lot of applications built on top of bitcoin do, they "burn" bitcoins in order to create metadata transactions in their own blockchains)

- allow someone who has a password to use the BTCs (but to submit the password, you would need to include it in clear inside a transaction which would inevitably be advertised to the network before actually getting mined. This is dangerous)

- allow a subset of signatures from a fixed set of public keys to redeem the BTCs (this is what we call multi-sig transactions)

- allow someone who can break a hash function (SHA-1) to redeem the BTCs (This is what Peter Todd did in 2013)

- only allow the BTCs to be redeemed after some time in the future (via a timestamp)

- etc.

On the other hand, if you want to use the money you need to prove that you can use such a transaction's output. For that you include a ScriptSig in a new transaction, which is another script that runs and creates a number of inputs to be used by the ScriptPubKey I talked about. And you guessed it, in our prime use-case this will include a signature (the Sig in the name)!

Recap: when you send BTCs, you actually send them to whoever can give a correct input (created by a ScriptSig) to your program (ScriptPubKey). In more detail, a Bitcoin transaction includes a set of input BTCs to spend and a set of output BTCs that are now redeemable by whoever can provide a valid ScriptSig. That's right, a transaction actually collects money from many previous transactions, and spreads it into possibly multiple pockets of money that other transactions can use. Each input of a transaction is associated with a previous transaction output, along with the ScriptSig to redeem it. Each output is associated with a ScriptPubKey. By the way, an output that hasn't been spent yet is called a UTXO, for unspent transaction output.

The scripting language of Bitcoin is actually quite limited and easy to learn. It uses a stack and must return True at the end. The limitations actually bothered some people, who thought it might be interesting to create something Turing-complete, and thus Ethereum was born.
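
To give a feel for how a ScriptSig feeds a ScriptPubKey, here is a toy stack machine. It is a sketch under heavy assumptions: scripts are lists of text tokens, values are plain integers, and only three opcodes are handled (`OP_ADD`, `OP_EQUAL`, and `OP_DUP` exist in Bitcoin, but real scripts are bytes and have many more rules). `Script`, `run`, and `can_spend` are names I made up.

```cpp
#include <cstdint>
#include <exception>
#include <string>
#include <vector>

using Script = std::vector<std::string>;

// Runs one script on the stack; returns false on stack underflow or a bad token.
bool run(const Script& script, std::vector<std::int64_t>& stack) {
  for (const std::string& token : script) {
    if (token == "OP_ADD" || token == "OP_EQUAL") {
      if (stack.size() < 2) return false;
      const std::int64_t b = stack.back();
      stack.pop_back();
      const std::int64_t a = stack.back();
      stack.pop_back();
      stack.push_back(token == "OP_ADD" ? a + b : (a == b ? 1 : 0));
    } else if (token == "OP_DUP") {
      if (stack.empty()) return false;
      stack.push_back(stack.back());
    } else {
      // Anything else is treated as a number to push on the stack.
      try {
        stack.push_back(std::stoll(token));
      } catch (const std::exception&) {
        return false;
      }
    }
  }
  return true;
}

// The ScriptSig runs first, then the ScriptPubKey runs on the stack it left.
// The output can be spent if the final top of the stack is true (non-zero).
bool can_spend(const Script& script_sig, const Script& script_pub_key) {
  std::vector<std::int64_t> stack;
  if (!run(script_sig, stack) || !run(script_pub_key, stack)) return false;
  return !stack.empty() && stack.back() != 0;
}
```

With the ScriptPubKey `OP_ADD 5 OP_EQUAL` (a puzzle: "give me two numbers that add up to 5"), the ScriptSig `2 3` can spend the output while `2 2` cannot. The usual pay-to-a-public-key case works the same way, with a signature check in place of the addition.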

Hey you!

You want to teach someone about a crypto concept, something 101 that could be explained in 1-2 pages with a lot of diagrams? Look no more, we need you.

Concept

The idea is to have a recurrent benevolent e-magazine (like POC||GTFO) that focuses on:

- cryptography: duh! That being said, cryptography does include: implementations, cryptocurrencies, protocols, at scale, politics, etc. so there are more topics that we deem interesting than just theoretical cryptography.

- pedagogy: heaps of diagrams and a focus on teaching. Taking an original writing style is a plus. We're looking not to bore readers.

- 101: we're looking for introductions to concepts, not deeply technical articles that require a lot of initial knowledge to grasp.

- short: articles should be similar to a blog post, not a full-fledged paper. With that in mind articles should be around 1, 2 or 3 pages. We are not looking for something dense though, so no posters, rather a submission should be a light read that can be part of a series or influence the reader to read more about the topic.

Topics

Preferably, authors should write about something they are familiar with, but here is a list of topics that would likely be interesting for such a light magazine:

- what is SSH?

- what is SHA-3?

- what is functional encryption?

- what is TLS 1.3?

- what is a linear differential attack?

- what is a cache attack?

- how does LLL work?

- what are common crypto implementation tricks?

- what is R-LWE?

- what is a hash-based signature?

- what is an RFC?

- what is the IETF?

- what is the IACR?

- why are companies encrypting databases?

- what are X.509, .pem, ASN.1 and base64?

- etc...

Format

LaTeX if possible.

Deadline

No deadline at the moment.

How to submit

Send me a Dropbox link or something on the contact page. You can also send it to me via Twitter.

PS: I am going to annoy you if you don't use diagrams in your article

I will be at the Tamuro meetup in London tonight (at the George Inn pub). It's a meetup about security and cryptography. Feel free to join if you want to grab a beer and talk about supersingular isogenies.